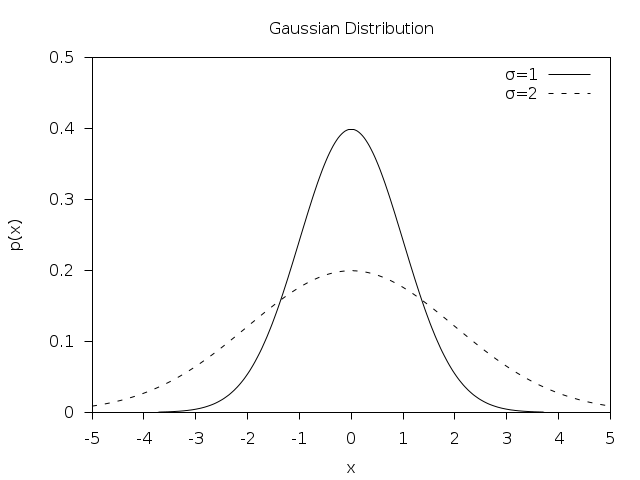

Covariance Functions - The heart of the GP model

Selecting the covariance function is the model selection process in the GP learning phase, and GPs gain much of their predictive power from choosing the right covariance (kernel) function. The kernel hyperparameters $\theta$ are set by maximizing the marginal likelihood $P(y \mid X, \theta)$. Once you have the marginal likelihood and its derivatives, you can use any out-of-the-box solver, such as (stochastic) gradient descent or conjugate gradient descent (caution: minimize the negative log marginal likelihood). Note that the marginal likelihood is not a convex function of its parameters, so the solution is most likely a local minimum/maximum. To make this procedure more robust, you can rerun your optimization algorithm from different initializations and keep the run with the lowest negative (equivalently, the highest) log marginal likelihood.

We first review the definition and properties of the Gaussian distribution. A Gaussian random variable $X \sim \mathcal{N}(\mu, \sigma^2)$ is described by two parameters: the mean $\mu$ of the distribution and its standard deviation $\sigma$. For example, if the mean of a normal distribution is 5 and the standard deviation is 2, the value 11 is three standard deviations above (to the right of) the mean, since $(11 - 5)/2 = 3$. In the notation $\mathcal{N}(x \mid \mu, \sigma^2)$, placing $\mu$ and $\sigma^2$ on the "given" side of the bar means that you can treat them as known quantities.

In a mixture model, the subpopulation assignment of each data point is not known, so fitting the model constitutes a form of unsupervised learning: mixture models in general don't require knowing which subpopulation a data point belongs to, allowing the model to learn the subpopulations automatically.

For the Gaussian filter, an analogy helps. Looking at a broad scene, the details are not clear; now look at a specific point in that scene and you see details you didn't see before. Similarly, when you increase sigma you are looking at the broad scene without paying attention to the details that exist in it, and when you decrease sigma you get more details.

Properties of Multivariate Gaussian Distributions
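The optimization recipe above can be sketched in a few lines. This is a minimal sketch, not the post's own method: it assumes a squared-exponential (RBF) kernel, a fixed and known noise level, and toy 1-D data, and the function names (`rbf_kernel`, `neg_log_marginal_likelihood`, `fit_with_restarts`) as well as the simple accept/reject step-size rule are hypothetical choices for illustration. It computes $-\log P(y \mid X, \theta)$ and its gradient, runs gradient descent on that negative value, and restarts from several random initializations.

```python
import numpy as np


def rbf_kernel(x1, x2, length_scale, signal_std):
    """Squared-exponential (RBF) covariance s^2 * exp(-(x - x')^2 / (2 l^2))."""
    sq_dist = (x1[:, None] - x2[None, :]) ** 2
    return signal_std ** 2 * np.exp(-0.5 * sq_dist / length_scale ** 2)


def neg_log_marginal_likelihood(log_params, x, y, noise_std):
    """Return -log P(y | X, theta) and its gradient w.r.t. theta = (log l, log s).

    Assumption: the noise level noise_std is fixed and known, not learned.
    """
    length_scale, signal_std = np.exp(log_params)
    n = len(x)
    k_f = rbf_kernel(x, x, length_scale, signal_std)
    k = k_f + noise_std ** 2 * np.eye(n)
    chol = np.linalg.cholesky(k)                                # K = L L^T
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))   # K^{-1} y
    nlml = (0.5 * y @ alpha                                     # data-fit term
            + np.sum(np.log(np.diag(chol)))                     # 0.5 * log|K|
            + 0.5 * n * np.log(2.0 * np.pi))                    # normalizing constant
    # d(-log P)/d(theta_j) = 0.5 * tr((K^{-1} - alpha alpha^T) dK/d(theta_j))
    k_inv = np.linalg.solve(chol.T, np.linalg.solve(chol, np.eye(n)))
    inner = k_inv - np.outer(alpha, alpha)
    sq_dist = (x[:, None] - x[None, :]) ** 2
    dk_dlog_l = k_f * sq_dist / length_scale ** 2
    dk_dlog_s = 2.0 * k_f
    grad = 0.5 * np.array([np.sum(inner * dk_dlog_l), np.sum(inner * dk_dlog_s)])
    return nlml, grad


def fit_with_restarts(x, y, noise_std=0.1, n_restarts=5, steps=200, seed=0):
    """Gradient descent on the NEGATIVE log marginal likelihood from several
    random starts; returns (length_scale, signal_std) and the lowest value found."""
    rng = np.random.default_rng(seed)
    best_log_params, best_nlml = None, np.inf
    for _ in range(n_restarts):
        log_params = rng.uniform(-1.0, 1.0, size=2)   # random init of (log l, log s)
        lr = 0.01
        nlml, grad = neg_log_marginal_likelihood(log_params, x, y, noise_std)
        for _ in range(steps):
            step = np.clip(lr * grad, -1.0, 1.0)       # bound each move in log-space
            cand = log_params - step
            cand_nlml, cand_grad = neg_log_marginal_likelihood(cand, x, y, noise_std)
            if cand_nlml < nlml:                       # accept and grow the step size
                log_params, nlml, grad = cand, cand_nlml, cand_grad
                lr *= 1.2
            else:                                      # reject and shrink the step size
                lr *= 0.5
        if nlml < best_nlml:                           # keep the best local optimum
            best_log_params, best_nlml = log_params, nlml
    return np.exp(best_log_params), best_nlml


if __name__ == "__main__":
    x = np.linspace(0.0, 5.0, 20)
    y = np.sin(x)
    params, nlml = fit_with_restarts(x, y)
    print("length scale, signal std:", params, "NLML:", nlml)
```

Because the objective is not convex, different starting points can settle in different local optima; the restart loop simply keeps whichever run ends with the lowest negative log marginal likelihood.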
In probability theory and statistics, the multivariate normal distribution (also called the multivariate Gaussian distribution or joint normal distribution) is a generalization of the one-dimensional (univariate) normal distribution to higher dimensions. Gaussian mixture models are a probabilistic model for representing normally distributed subpopulations within an overall population.

Here, $\mathcal{N}$ means the normal PDF:

$$\mathcal{N}(x \mid \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-(x-\mu)^2 / (2\sigma^2)}$$

Its bell-shaped curve depends on $\mu$, the mean, and $\sigma$, the standard deviation ($\sigma^2$ being the variance). The so-called standard normal distribution is given by taking $\mu = 0$ and $\sigma = 1$ in a general normal distribution. The normal distribution is implemented in the Wolfram Language as `NormalDistribution[mu, sigma]`. The Kaniadakis κ-Gaussian distribution is a generalization of the Gaussian distribution which arises from the Kaniadakis statistics, being one of the Kaniadakis distributions.

Three percentages are often called benchmarks for normally distributed data: about 68% of values lie within 1 standard deviation of the mean, 95% within 2 standard deviations, and 99.73% within 3 standard deviations.

To build intuition for sigma in image filtering, take a look at a broad scene without paying attention to any specific point: you see a broad scene with lots of things in it. From the point of view of natural image statistics, researchers in this field have argued that our visual system responds to images somewhat like a Gaussian filter. Filtering in the spatial domain is done through convolution, which means that we apply a kernel at every pixel in the image. But as you know, this happens in the discrete domain (image pixels), so we have to quantize our Gaussian filter in order to make a Gaussian kernel. In the quantization step, a Gaussian filter with a small sigma has the steepest peak, so more of the weight is focused at the center and less around it. Now putting it all together: when we apply a Gaussian filter to an image, we are doing low-pass filtering.
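The chain from the normal PDF to a quantized kernel to low-pass filtering can be sketched in Python with NumPy. This is an illustrative sketch under stated assumptions: a 1-D signal stands in for one row of image pixels, and the helper names (`normal_pdf`, `prob_within`, `gaussian_kernel`, `gaussian_blur_1d`), the 3σ truncation radius, and the edge-padding mode are hypothetical choices, not something specified in the post. It checks the 68/95/99.73 benchmarks with the error function, samples the Gaussian at integer pixel offsets, and convolves the resulting kernel with a sharp step edge.

```python
import math

import numpy as np


def normal_pdf(x, mu=0.0, sigma=1.0):
    """N(x | mu, sigma^2) = exp(-(x - mu)^2 / (2 sigma^2)) / sqrt(2 pi sigma^2)."""
    return np.exp(-(x - mu) ** 2 / (2.0 * sigma ** 2)) / np.sqrt(2.0 * np.pi * sigma ** 2)


def prob_within(k):
    """P(|X - mu| < k * sigma) for any normal X, via the error function."""
    return math.erf(k / math.sqrt(2.0))


def gaussian_kernel(sigma, radius=None):
    """Quantize the Gaussian: sample it at integer pixel offsets, then normalize
    so the weights sum to 1. Assumed truncation: radius = ceil(3 * sigma)."""
    if radius is None:
        radius = int(math.ceil(3 * sigma))
    offsets = np.arange(-radius, radius + 1)
    weights = normal_pdf(offsets, 0.0, sigma)
    return weights / weights.sum()


def gaussian_blur_1d(signal, sigma):
    """Low-pass filter a 1-D signal by convolving it with the discrete kernel."""
    kernel = gaussian_kernel(sigma)
    padded = np.pad(signal, len(kernel) // 2, mode="edge")   # repeat border pixels
    return np.convolve(padded, kernel, mode="valid")          # same length as input


if __name__ == "__main__":
    for k in (1, 2, 3):
        print(f"within {k} sigma: {prob_within(k):.4f}")
    print("sigma=0.5 kernel:", np.round(gaussian_kernel(0.5), 3))  # steep peak
    print("sigma=2.0 kernel:", np.round(gaussian_kernel(2.0), 3))  # flatter spread
    edge = np.array([0.0] * 5 + [1.0] * 5)                         # sharp step edge
    print("blurred edge:", np.round(gaussian_blur_1d(edge, 1.0), 3))
```

Running it prints 0.6827, 0.9545 and 0.9973 for k = 1, 2, 3. The sigma = 0.5 kernel puts far more weight on its center tap than the sigma = 2.0 kernel, and the blurred step rises gradually instead of jumping, which is the low-pass behavior described above. For a 2-D image the same kernel is commonly applied along rows and then columns, since the 2-D Gaussian is separable.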

The Gaussian distribution (also known as the normal distribution) is a probability distribution. As sigma becomes larger, more variance is allowed around the mean, and as sigma becomes smaller, less variance is allowed around the mean. The two curves below have the same mean, but the curve on the right has a higher standard deviation, since it is a wider curve. In a distribution, the full width at half maximum (FWHM) is the difference between the two values of the independent variable at which the dependent variable is equal to half of its maximum value.

The role of sigma in the Gaussian filter is likewise to control the variation around its mean value. When we look at the image in the frequency domain, the highest (spatial) frequencies occur at the edges, that is, at places where the intensity changes sharply. Low-pass filters, as their name implies, pass low frequencies and attenuate high ones. I think it should be done in the following steps, first from the signal processing point of view:
